Here comes the AI...

Issue 14: Why Australia needs data centres — and why they must become a core part of the electricity system

After twenty years of flat demand, AI data centres are bringing massive load growth back to Australia's electricity grid. The question is not whether we can handle it, but whether we're smart enough to shape it.

For much of the past two decades, Australia’s electricity system has been defined by an uncomfortable pairing. Network costs have continued to rise, while the volume of energy flowing through the grid has remained broadly flat. Efficiency gains, industrial change and, above all, rooftop solar have suppressed net demand even as the physical system expanded.

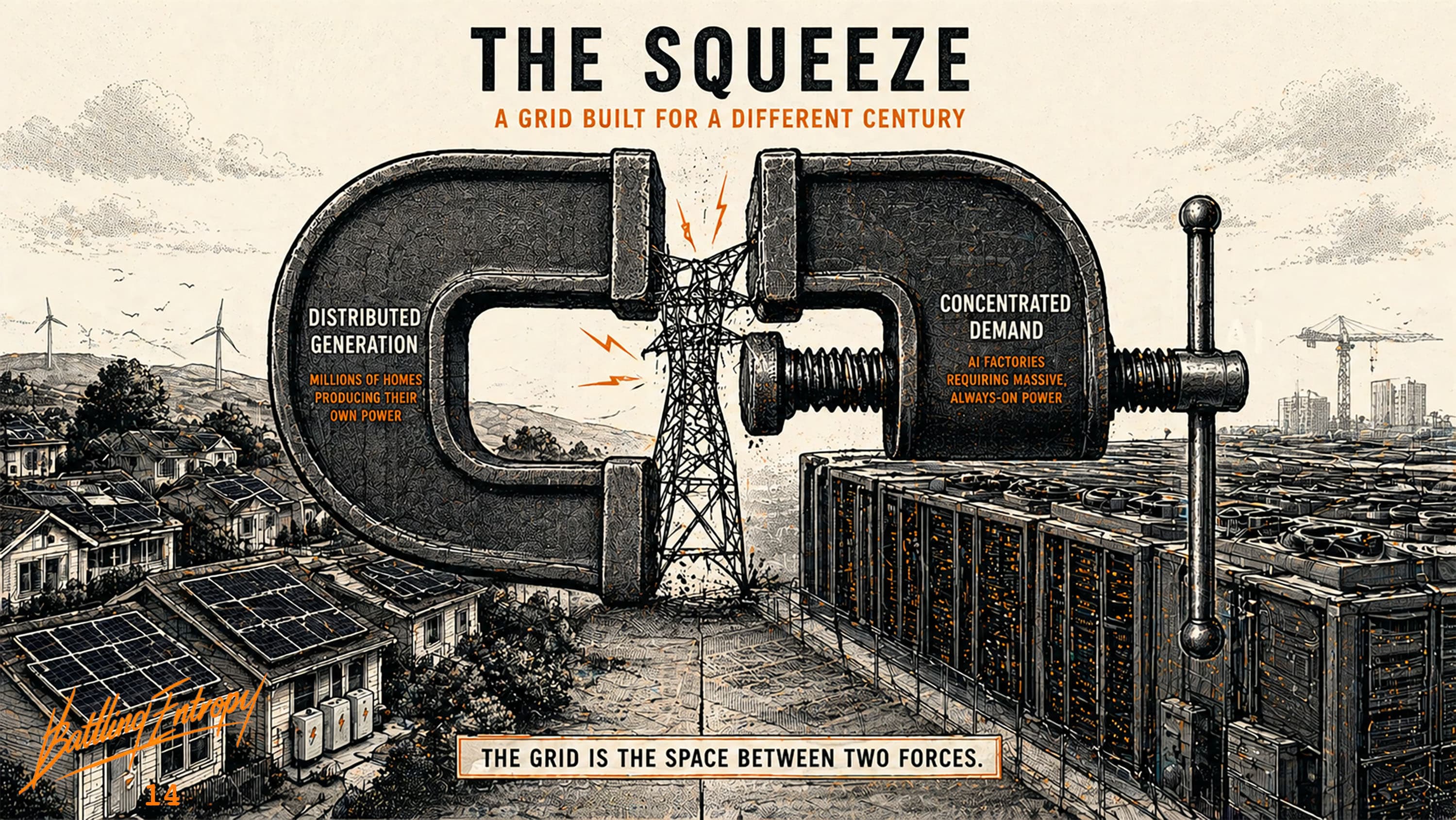

The result, as explored in The Grid Squeeze, is a system carrying more infrastructure across a largely unchanged base of consumption. That dynamic is not explosive. It is slower and more insidious. Rising capital is spread across fewer useful kilowatt-hours, increasingly burdening consumers with costs.
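The arithmetic behind that squeeze can be sketched in a few lines. The figures below are hypothetical, chosen only to show the mechanism of a growing revenue requirement recovered over flat energy volumes. The function name and numbers are illustrative assumptions, not data from The Grid Squeeze.

```python
def network_cost_per_kwh(revenue_requirement_dollars: float, energy_kwh: float) -> float:
    """Average network cost recovered per kilowatt-hour delivered."""
    if energy_kwh <= 0:
        raise ValueError("energy_kwh must be positive")
    return revenue_requirement_dollars / energy_kwh


# Hypothetical figures, for illustration only.
flat_energy_kwh = 10_000_000_000  # 10 TWh delivered, unchanged across the period

cost_then = network_cost_per_kwh(1_000_000_000, flat_energy_kwh)  # $1.0B to recover
cost_now = network_cost_per_kwh(1_500_000_000, flat_energy_kwh)   # $1.5B to recover

print(f"then: ${cost_then:.3f}/kWh, now: ${cost_now:.3f}/kWh")
```

With volumes held flat, every extra dollar of network spending shows up directly in the per-kilowatt-hour cost.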

Into that world comes artificial intelligence: not just as software, but as load.

The scale of that load is not settled. Forecasts vary widely, and recent improvements in model efficiency suggest that the most aggressive projections may not be realised. What is clear, however, is that computing has become materially more energy-intensive than in the past. Even conservative scenarios point to a new class of demand that is large, concentrated and economically significant.

Demand growth, long absent, has returned. The question is not whether it happens, but how it lands.

The grid we built, and the one we are getting

The existing electricity system was designed for a simple structure: central generation, passive demand, predictable peaks. Power flowed from large plants through transmission and distribution networks to customers.

Rooftop solar has disrupted the model. Millions of households now generate electricity. Increasingly, with batteries, they also store and manage it. On many days, a detached house can meet most of its own needs locally. The grid is still essential, but its role is shifting. It is becoming less a daily delivery mechanism and more a form of insurance — something relied upon during extended bad weather, evening peaks, or unexpected surges in demand.

That shift creates a quiet but significant tension. If some households draw less from the system, the fixed costs of maintaining it fall more heavily on those who cannot. Renters, apartment dwellers and those without suitable roofs remain fully exposed. The network is still required for everyone, but its utilisation becomes uneven. This is not just an engineering issue. It is an equity problem.

At the same time, the system is about to absorb a very different kind of load.

The modern data centre is different

For most of the internet era, data centres were retrieval engines. They located stored information and returned it. The energy required for that task, while not trivial, was modest relative to the industrial system that supported it.

That is no longer the case. Modern computing increasingly performs interpretation, generation and reasoning. As Jensen Huang has observed, computing has shifted from a retrieval-based model to a generative one. That framing is useful, but the underlying shift does not rest on his advocacy. The reality is visible across the industry: workloads are becoming far more computationally intensive.

The consequence is straightforward. The modern data centre is not simply a repository of information. It is a production system. It takes electricity and converts it into tokens, inference and decision-making.

The old internet moved information. AI manufactures it. That distinction matters, because manufacturing has always been energy-intensive.

The load is new, and so is its shape

It would be a mistake to think of AI demand as simply “more electricity”. It is not just an increase in volume. It is a change in form.

Households are becoming lighter users of the grid, at least in net terms, for significant parts of the day. Data centres are becoming heavier users, often in concentrated locations. The system is being pulled in two directions at once: distributed supply at the edge, concentrated demand at specific nodes.

This is the structural mismatch captured in The NEM 101: the market prices energy at a regional level, but the physical system operates locally. The gap between those two layers becomes more visible as both distributed generation and concentrated demand increase.

Geography: a system beginning to split

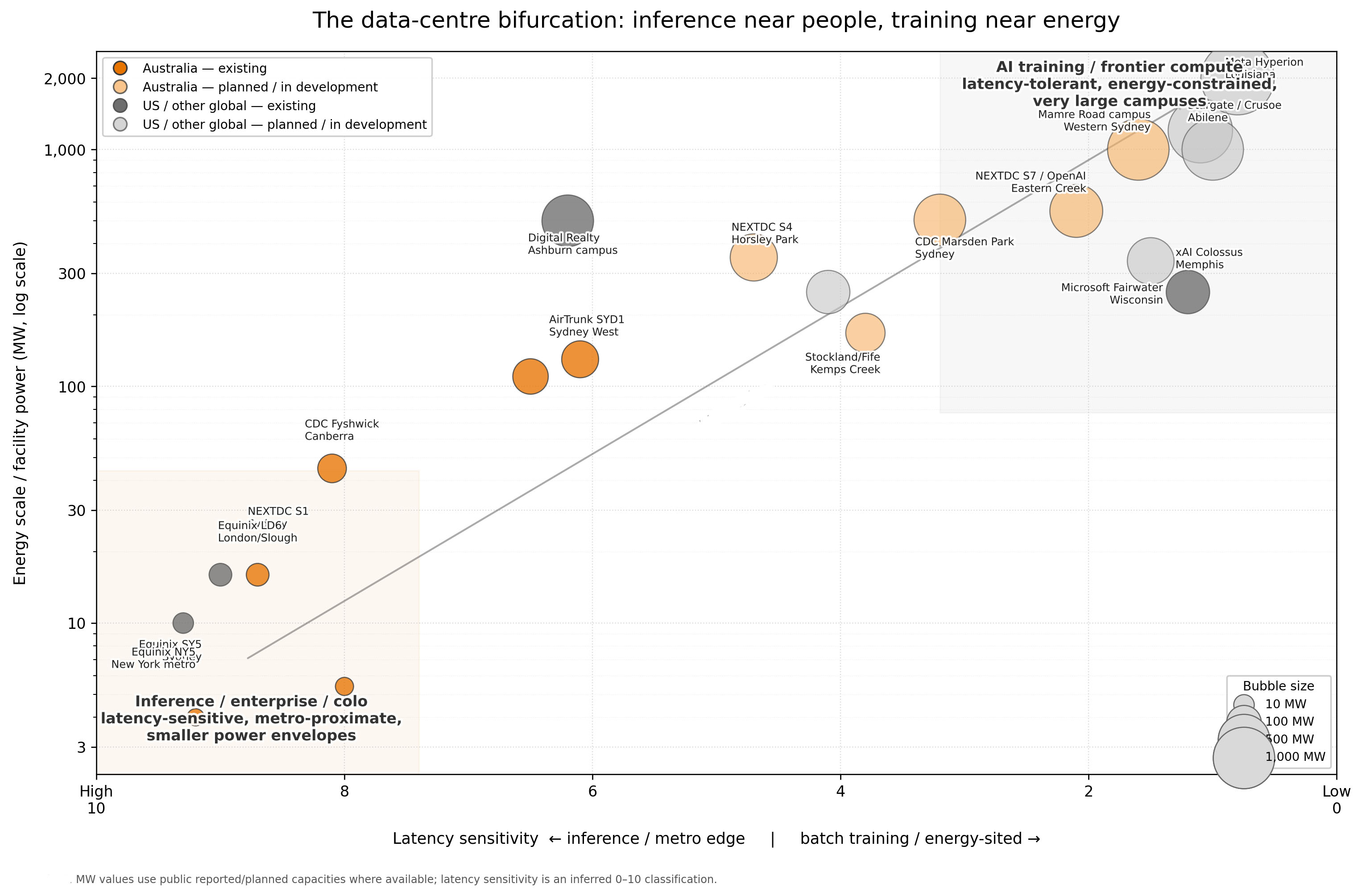

There is an apparent contradiction in how data centres are described. They are often said to cluster in major cities, where connectivity, customers and existing infrastructure are strongest. That is true. Sydney and Melbourne remain dominant hubs for enterprise, government and low-latency computing.

At the same time, the largest and most energy-intensive workloads are already migrating elsewhere. Hyperscale facilities have been moving toward regions with cheaper land and power for several years. The system is not concentrating or decentralising. It is bifurcating.

Latency-sensitive workloads (real-time inference, finance, healthcare, defence) remain close to users. Bulk computation (training, batch inference, rendering, synthetic data) is more mobile.

This distinction matters because it changes how the grid should evolve. It is often easier to move data than electricity. Fibre networks are not costless, but they are lighter and more scalable than transmission infrastructure. Where latency allows, computation can move toward energy rather than forcing energy toward computation.

The implication is simple: Put inference near people. Put training near energy. Australia’s future data-centre footprint should reflect that logic, rather than defaulting to a purely metropolitan model. The emerging shape of the system is not theoretical. It is already visible.
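As a rough illustration, the siting rule can be written as a single decision on latency tolerance. This is a minimal sketch: the `Workload` type, the 50 ms threshold and the site labels are all hypothetical assumptions, and a real siting decision would weigh many more factors.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Workload:
    name: str
    max_latency_ms: float  # slowest round-trip response the workload can tolerate


# Hypothetical cut-off: anything that needs a faster response stays near users.
LATENCY_THRESHOLD_MS = 50.0


def preferred_site(workload: Workload) -> str:
    """Apply the rule of thumb: inference near people, training near energy."""
    if workload.max_latency_ms <= LATENCY_THRESHOLD_MS:
        return "metro"
    return "energy-rich region"


for w in (Workload("real-time inference", 20.0), Workload("training run", 5000.0)):
    print(w.name, "->", preferred_site(w))
```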

The industry is splitting. Latency-sensitive workloads cluster near cities. Energy-intensive training migrates toward power.

The flexibility question

Much of the policy optimism around data centres rests on the idea that they can become flexible loads, actively supporting the grid. That claim needs to be treated carefully. Not all computing is flexible. Frontier training runs are expensive and not easily interrupted. Paid inference must respond in real time. Some workloads are, in practice, fixed. For frontier training, compute itself is scarce, and using it intermittently is wasteful.

What can flex is a narrower but still meaningful band: batch inference, synthetic data generation, training windows, redundancy capacity and non-urgent processing. That flexibility is real, although partial. This defines the design problem: not how to integrate fully flexible demand, but how to integrate partially flexible demand at scale.
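One way to picture partial flexibility is a load with a firm floor that runs every hour, plus a block of deferrable energy that can be moved into the cheapest hours. The sketch below assumes hourly intervals (so MW and MWh are interchangeable within an hour), a fixed site capacity, and made-up prices. It is a simple greedy heuristic for illustration, not a description of how any operator schedules work.

```python
def schedule_load(prices, firm_mw, flexible_mwh, max_mw):
    """Return hourly MW: firm load in every hour, flexible energy in the cheapest hours."""
    headroom = max_mw - firm_mw  # spare capacity available for flexible work each hour
    if headroom < 0:
        raise ValueError("firm load exceeds site capacity")
    if flexible_mwh > headroom * len(prices):
        raise ValueError("flexible energy does not fit within the horizon")

    schedule = [firm_mw] * len(prices)
    remaining = flexible_mwh
    # Visit hours from cheapest to most expensive, filling headroom as we go.
    for hour in sorted(range(len(prices)), key=lambda h: prices[h]):
        if remaining <= 0:
            break
        extra = min(headroom, remaining)
        schedule[hour] += extra
        remaining -= extra
    return schedule


# Hypothetical six-hour price curve in $/MWh, including a negative midday price.
prices = [90, 40, 10, -5, 20, 150]
schedule = schedule_load(prices, firm_mw=60, flexible_mwh=70, max_mw=100)
print(schedule)
```

The firm 60 MW never moves. Only the 70 MWh of deferrable work shifts, and it lands in the two cheapest hours.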

Emerging work from operators such as Google, and research highlighted in The Grid Squeeze, suggests that even limited flexibility can be valuable. The question is not whether data centres can behave like batteries. It is whether they can behave like responsive industrial loads.

That is enough to change the system, but not enough to ignore its constraints.

Lessons from elsewhere

The world is not encountering this problem for the first time.

Ireland effectively halted new data-centre connections in Dublin in 2022 due to grid constraints. In the United States, utilities in Virginia and Texas are grappling with connection queues and substation limits. The Netherlands has imposed restrictions on new connections in congested regions.

These are early versions of the same issue: large, concentrated digital loads colliding with infrastructure not designed to accommodate them quickly.

A more recent development complicates the picture further. Faced with multi-year grid connection queues, several US hyperscalers have begun building generation behind the meter: on-site gas turbines in Texas and Virginia, and direct nuclear power purchase agreements in Pennsylvania and elsewhere. The logic is straightforward: if the grid cannot deliver firm power on the timescale the business requires, the business will bring its own.

For the operator, this solves a constraint. For the system, it is a thesis-killer. A data centre that generates its own electricity is not part of the grid in any meaningful sense. It does not absorb surplus renewables, does not respond to system needs, and does not contribute to spreading fixed network costs. It is an industrial-scale island. If Australian developers follow the same path, and the incentives to do so will grow with every connection delay, the integration opportunity disappears before it can be designed for.

Keeping hyperscalers on the system will require connection processes measured in months rather than years, network tariffs that reward flexibility rather than penalise it, and priority access for facilities willing to shape their load around system needs. That is a job for the AEMC, the AER and our network businesses, and the longer it is deferred, the more the behind-the-meter path becomes the default.

The lesson is not that data centres are incompatible with the grid. It is that integration needs to be managed explicitly. Managed well, it becomes an opportunity. Left unmanaged, it becomes a constraint problem that pushes hyperscalers toward the self-supply route. That risk is what makes the integration problem urgent.

The near-term reality

In the near term, reliability requirements mean that gas will likely play a role.

This is not a statement about long-term system design, but about deployment speed and operational certainty. Gas generation can be built and dispatched more quickly than most alternatives. For data centres requiring firm supply today, it is the most practical bridge.

That does not make it the final destination. The long-term cost competitiveness of gas relative to firmed renewables remains contested, and the risk of long-lived assets becoming embedded in the system is real.

The important point is that short-term engineering decisions should not be mistaken for long-term architecture.

The system we should build

The deeper opportunity lies in how data-centre demand is integrated.

A rigid, always-on data centre in a constrained location imposes costs on the system. A partly flexible, well-located data centre can reduce them. The distinction should be reflected in how connections are offered and priced.

Facilities that demand inflexible supply should bear the full cost of that choice. Facilities that provide flexibility, storage or local generation should benefit from faster connections and lower charges. This is not a radical idea. It is simply the application of cost causation to a new class of load.
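A minimal sketch of what cost causation could look like in a charge: bill only the demand the network must be built to serve at peak, so curtailable load pays less. The function, the rate and the curtailable shares below are hypothetical assumptions for illustration, not a description of any existing AER or AEMC tariff.

```python
def annual_network_charge(peak_mw: float, curtailable_share: float,
                          charge_per_firm_mw: float) -> float:
    """Charge only the firm (non-curtailable) demand at network peak."""
    if not 0.0 <= curtailable_share <= 1.0:
        raise ValueError("curtailable_share must be between 0 and 1")
    firm_mw = peak_mw * (1.0 - curtailable_share)
    return firm_mw * charge_per_firm_mw


RATE = 100_000.0  # hypothetical $ per firm MW per year

# Two 100 MW facilities: one rigid, one able to curtail a quarter of its load on request.
rigid_charge = annual_network_charge(100.0, curtailable_share=0.0, charge_per_firm_mw=RATE)
flexible_charge = annual_network_charge(100.0, curtailable_share=0.25, charge_per_firm_mw=RATE)

print(f"rigid: ${rigid_charge:,.0f}  flexible: ${flexible_charge:,.0f}")
```

Under these assumptions the inflexible facility pays for the full 100 MW it requires at peak, while the flexible one pays only for the 75 MW it insists on.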

It also connects directly to the equity issue. If data centres are integrated intelligently, they can bring new demand onto the system, helping to spread fixed costs more broadly. If they are integrated poorly, they risk increasing network costs that are then passed through to households already struggling to carry them.

Demand is not the problem

For years, the energy debate has treated demand growth as something to be avoided. That made sense in a system where additional demand implied additional fossil generation and higher network costs. That is no longer the only model available. In a system with cheap solar, wind, storage and increasingly responsive demand, growth can be beneficial. It can absorb surplus generation, justify investment in transmission and distribution, and rebalance a system that has been under strain from stagnation.

The challenge is not demand itself, but its form. Inflexible demand in the wrong place at the wrong time will stress the system. Flexible demand, properly located and priced, can strengthen it.

The real test

The AI load shock is often framed as a risk to net zero. That is one lens, but it is not the most important one.

The more fundamental question is whether Australia can build an electricity system that matches the realities of modern demand. That means integrating distributed supply and concentrated load, pricing networks appropriately, and allowing both energy and demand to respond to physical constraints.

The boundary between producer and consumer is already blurring. Households generate and store energy. Data centres can, to some extent, shape their demand. The next system will not simply dispatch generation. It will coordinate both sides of the equation.

If we get that right, data centres will not be a burden on the grid. They will be part of how it works. If we get it wrong, we will build expensive infrastructure to serve inflexible demand while ignoring cheaper, more adaptable solutions.

The AI load shock is not something to be resisted. It is a chance to fix a system that, until now, has been quietly struggling to keep up with its own design.

Australia does not just need more electricity. It needs a more intelligent electricity system. And if we are going to host the machines that produce intelligence, the least we can do is make sure they help us build one.

Take care, Tony

The views expressed here are my own and do not represent those of any organisation unless explicitly stated. This is not financial or investment advice.

Sources / Further Reading

Australian Energy Market Commission. (2019). Coordination of generation and transmission investment: Access reform, directions paper. AEMC.

Australian Energy Market Commission. (2025). Energeia finds CER flexibility could deliver $45B in benefits by 2050. AEMC.

Australian Energy Market Operator. (2024). 2024 Integrated System Plan. AEMO.

Energeia. (2025). Benefit analysis of load-flexibility from consumer energy resources. Prepared for the Australian Energy Market Commission.

Google Cloud. (2023). Supporting power grids with demand response at Google data centers. Google.

Google. (2025). How we’re making data centers more flexible to benefit power grids. Google.

International Energy Agency. (2025). Energy and AI: Energy demand from AI. IEA.

KPMG Ireland. (2026). Ireland’s data centre policy reset. KPMG.

NVIDIA. (2025). NVIDIA CEO envisions AI infrastructure industry worth “trillions of dollars”. NVIDIA Blog.

Texas Tribune. (2025). Data centers are building their own gas power plants in Texas. The Texas Tribune.

Virginia Business. (2026). Dominion prepares for 70,000 MW in data center demand. Virginia Business.

Data Center Dynamics. (2024). Three Mile Island nuclear power plant to return as Microsoft signs 20-year 835MW AI data center PPA. Data Center Dynamics.

Greenberg Traurig. (2024). Challenges in the Dutch data center market. Greenberg Traurig.

Associated Press. (2024). Ireland embraced data centers that the AI boom needs. Now they’re consuming too much of its energy. AP News.

Ferguson, A.J. (2026). The grid squeeze: The $100 billion question: Can demand flexibility replace steel and wire? Battling Entropy.

Ferguson, A.J. (2026). The NEM 101: Australia’s National Electricity Market. Battling Entropy.
